A screenshot of this question was making the rounds last week, but this article covers testing it against all the well-known models out there.

Also includes outtakes on the ‘reasoning’ models.

I think it’s worse when they get it right only some of the time. It’s not a matter of opinion; it should not change its “mind”.

The fucking things are useless for that reason, they’re all just guessing, literally.

> they’re all just guessing, literally

They’re literally not.

Isn’t it a probabilistic extrapolation? Isn’t that what a guess is?

Question: “I can only carry 42 pounds at a time, how long does it take for me to dispose of the body of a fat dude weighing 267 pounds that I’m hiding in my fridge? And how many child sacrifices would I need?”


What worries me is the consistency test, where they ask the same thing ten times and get opposite answers.

One of the really important properties of computers is that they are massively repeatable, which makes debugging possible by re-running the code. But as soon as you include an AI API in the code, you cease being able to reason about the outcome. And there will be the temptation to say “must have been the AI” instead of doing the legwork to track down the actual bug.

I think we’re heading for a period of serious software instability.

Even when they give the correct answer, they talk too much. AI responses contain a lot of garbage. When AI gives you an answer, it will try to justify itself. Since they won’t give you brief responses, the responses will be long.

I agree with you but found that DeepSeek was succinct.

> You need to bring your car to the car wash, so you should drive it there. Walking would leave your car at home, which doesn’t help.

Your post is much longer than it needs to be. That’s the reason why: they just copied people.

Very interesting that only 71% of humans got it right.

That 30% of population = dipshits statistic keeps rearing its ugly head.

I mean, I’ve been saying this since LLMs were released.

We finally built a computer that is as unreliable and irrational as humans… which shouldn’t be considered a good thing.

I’m under no illusion that LLMs are “thinking” in the same way that humans do, but god damn if they aren’t almost exactly as erratic and irrational as the hairless apes whose thoughts they’re trained on.

Yeah, the article cites that as a control, but it’s not at all surprising, since “humanity by survey consensus” is an accurate description of how LLM weights trained on random human output work.

It’s impressive up to a point, but you wouldn’t exactly want your answers to complex math operations or other specialized areas to track layperson human survey responses.

> which shouldn’t be considered a good thing.

Good and bad is subjective and depends on your area of application.

What it definitely is, is different from what was available before, and since it is different, there will be some things it is better at than what came before. And many things it’s much worse at.

Still, in the end, there is real power in diversity. Just don’t use a sledgehammer to swipe-browse on your cellphone.

I asked Lars Ulrich to define good and bad. He said…

FIRE GOOD!!! NAPSTER BAD!!! OOOOH FIRE HOT!!! FIRE BAD!!! FIIIRRREEE BAAAAAAAD!!!

As someone who takes public transportation to work, SOME people SHOULD be forced to walk through the car wash.

I’m not afraid to say that it took me a sec. My brain went “short distance. Walk or drive?” and skipped over the car wash bit at first. Then I laughed because I quickly realized the idiocy. :shrug:

And that score is matched by GPT-5. Humans are running out of “tricky” puzzles to retreat to.

What this shows, though, is that there isn’t actual reasoning behind it. Any improvements from here will likely be because this is a popular problem, and results will be brute-forced with a bunch of data, instead of any meaningful change in how they “think” about logic.

Plenty of people employ faulty reasoning every single day of their lives…

You’re getting downvoted but it’s true. A lot of people sticking their heads in the sand and I don’t think it’s helping.

Yeah, “AI is getting pretty good” is a very unpopular opinion in these parts. Popularity doesn’t change the results though.

It’s unpopular because it’s wrong.

It’s overhyped in many areas, but it is undeniably improving. The real question is: will it “snowball” by improving itself in a positive feedback loop? If it does, how much snow-covered slope is in front of it for it to roll down?

I think it’s far more likely to degrade itself in a feedback loop.

It’s already happening. GPT 5.2 is noticeably worse than previous versions.

It’s called model collapse.

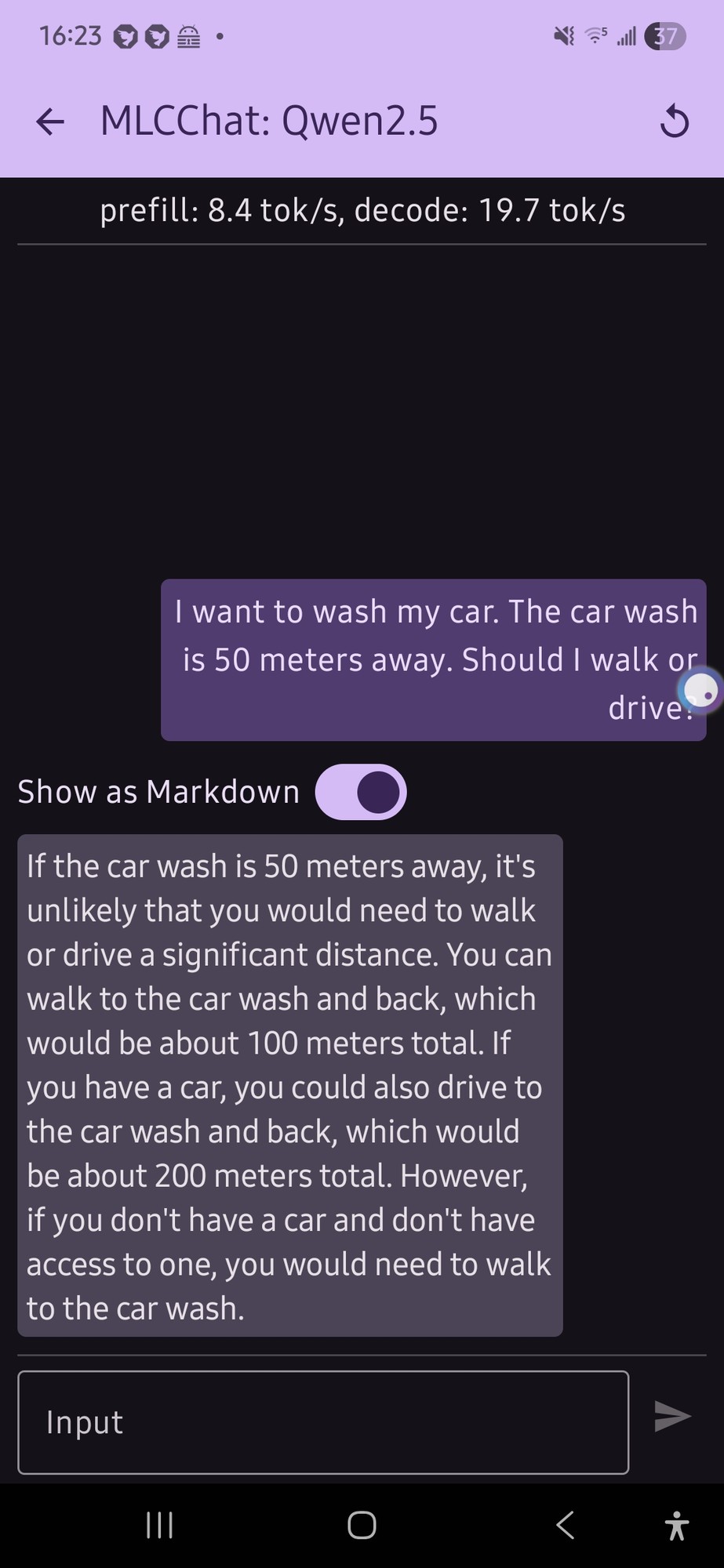

I tried this with a local model on my phone (Qwen 2.5 was the only thing that would run), and it gave me this confusing output (not really a definite answer…):

It just flip-flopped a lot.

Edit: also, looking at the response now, the numbers for the car part don’t make any sense.

200 m, huh.

I like that it’s twice as far to drive for some reason. Maybe it’s getting added to the distance you already walked?

Honestly that’s a lot more coherent than what I would expect from an LLM running on phone hardware.

I notice that the “internal thinking” of Opus 4.6 does more flip-flopping than earlier models like Sonnet 4.5, and it comes out with correct answers in the end more often.

Didn’t like 30% of the population elect Trump? Coincidence? I don’t think so.

> “I want to wash my car. The car wash is 50 meters away. Should I walk or drive?”

The model discards the first sentence as it is unrelated to the others.

Remember, this is a conversation model. If you were talking to someone and they said that, you would probably ignore the first sentence because it is in a different tense.

You must have done some really extensive probing of the models to say that with confidence. When can we expect the paper?

Sorry, they’re both present simple tense.

Gemini set to fast now provides this type of answer.

Extension cord? It must mean a hose extension.

Opus 4.6 has been excellent at problem solving in software development, so no surprise it nails it.

It’s no surprise public opinion is these tools are trash when the free models are unable to answer simple questions

> It’s no surprise public opinion is these tools are trash when the free models are unable to answer simple questions

The tools are trash not because they are unreliable but because they are actively destroying human society and culture. They are destroying art, science, journalism, open source software, the internet at large, and the environment we all live in. It wouldn’t matter if the generative models were accurate, they would still be garbage.

The fact that they are unreliable just serves to highlight what a colossally destructive waste of time and resources this entire exercise has been.

Eh, the art industry destroyed itself when it became nothing but sellouts. This happened decades ago.

The fact is AI can make as-good or better art than most “artists” because most “art” is just cookie-cutter shit for morons.

> The fact is AI can make as-good or better art than most “artists” because most “art” is just cookie-cutter shit for morons.

This is an obvious misstatement. If you actually believe this then you’re not qualified to have opinions on art in general.

“AI” (in this context meaning generative algorithms, because there is no intelligence) can no more “make art” than it can think, or care.

> This is an obvious misstatement. If you actually believe this then you’re not qualified to have opinions on art in general.

“At this point, the only thing that makes money is garbage. It’s just fascinating. It makes a fortune, and that’s the bottom line,”

“writers have been trained to eat and make the garbage too. As long as they are in that arena making that shit, then you might as well have AI do it,”

-Charlie Kaufman

https://deadline.com/2023/08/charlie-kaufman-ai-wga-strike-hollywood-sarajevo-1235498089/


You’re probably one of the people that enjoys cookie-cutter art which is why you get defensive when someone says AI can make it.

Not sure at what point you will realize that what you quoted/said has absolutely nothing to do with the actual topic.

Probably never.

> The fact is AI can make as-good or better art than most “artists” because most “art” is just cookie-cutter shit for morons.

> “writers have been trained to eat and make the garbage too. As long as they are in that arena making that shit, then you might as well have AI do it,”

Learn to read.

Could you define what you mean when you say the word “art”? I think this may be a semantic disagreement. I think the people you’re arguing with are using a definition similar to “human creative expression” while you seem to mean something different.

“Idiot who only looks at mainstream sellouts calls all art culture sellouts”

😂😂😂

I said the art industry.


The free models feel years behind, so people constantly underestimate what it’s capable of. I still hear people say AI can’t generate fingers.

I remember years ago getting downvoted into oblivion both here, and on Reddit for saying that AI would be a disaster.

I’m going to have to read this, because my knee-jerk answer is that it depends on what type of wash you want to give the car. If you want to give the car an actual wash at the car wash, you’re going to have to drive it. But if you’re wanting to wash it at home, then it doesn’t matter how far away the car wash is, because you can just walk out your front door and grab your water hose and soap and shit.

> if you’re wanting to wash it at home,

The AI should absolutely understand the implication that you want to wash your car at the car wash, not at home. The prompt is clear about that, even though it is implied.

“I want a hamburger. McDonald’s is three miles from me and Wendy’s is five miles. Which is the cheaper place to get a burger from when you consider the distance to each?” is not an exact analogy, but the point is that it should be ABSOLUTELY clear that you do not wish to make your own hamburger. Any response that discusses that as an option is ridiculous, unless maybe it’s one of those options-at-the-end things LLMs love to do, but it has no part in the main answer at all.

https://sh.itjust.works/comment/23942074

They said it better than I can

But I also get where you’re coming from; the prompt itself is weak and leaves out several assumptions that would let an LLM give better answers.

I do think it’s interesting, but I think there are implicit assumptions in such a short prompt.

Is it a self-service car wash? If not, walking to the attendant and handing them your keys makes more sense.

If it is self-service without queuing, there may be no available spaces/the bay may not be open, requiring some awkward maneuvering.

If you change it to something like:

I want to wash my car. The unattended, self-service car wash is 50 meters away. All of the bays are clear and open. Should I walk or drive? Break each option down into steps, and estimate the amount of time each takes.

You’re more likely to get correct responses.

You have to have the car there no matter what type of car wash it is.

If the car wash is some distance “away”, it means neither you nor the car is at it. No attendant is going to walk off the property to retrieve your car, especially since at most of them you drive up for service. Which is rather the point.

You shouldn’t have to. If you ask a person that question, they’ll respond “what good is walking to the car wash, dumbass?” If AI can’t figure that out, it’s trash.

A person would look at you like you are an idiot if you asked this question.

The AI tool I asked said walking saves money, gets you exercise, etc.

Asked about the car and it said the car is at the car wash, otherwise why would you ask how to get there?

Missing the point. Any person would know walking to the car wash isn’t reasonable. You shouldn’t have to craft a perfectly tailored prompt for AI to realize that. If you think this is a gotcha, then whoa boy, I’ve got a bridge to sell ya!

You are missing the point. Any reasonable person would wonder why you’re asking a stupid question.

Which is why, when asked, the AI said of course the car is there; you must be asking either a trick question or for some other reason.

It could be that. Or it could be that the AI gives the illusion of reasoning and this is an example of the illusion breaking. But no, it was probably that it knew it was a trick question and decided to answer wrongly because it is very, very smart. Yeah.

A properly functioning LLM absolutely has to understand implicit instructions. A huge part of data annotation work aimed at making LLMs better tools is grading them on whether or not they understand implicit instructions. I would say more than half of the work I have done in that arena has focused on training them to understand implicit instructions more clearly.

So sure, if you explain it like the LLM is five, you’ll get a better response, but the whole point is if we’re dumping so much money and resources and destroying the environment for these tools, you shouldn’t have to explain it like it’s five.

Kinda neat about the human responses… sure some are trolling but maybe we have to test our global expectations. In North America, a car wash tends to be this garage thing with either automated cleaning or a set of supplies to clean your car, and your car has to be in the shed to be cleaned effectively. But if washing your car by hand is the norm, I wonder if people in some countries surmise that the cleaning staff could just walk over with the sponges, buckets and hoses and stuff to the car, if you’re already 50 metres away from the washing point.

Ain’t no business gonna let employees LEAVE the property to wash some idiot’s car down the road.